We partner with cnvrg.io

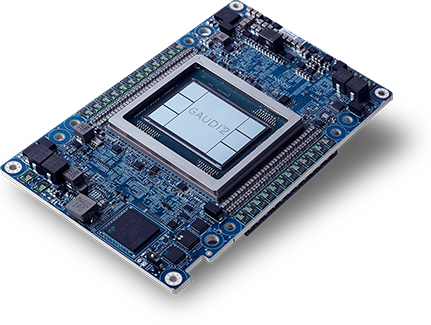

Developers can leverage the efficiency of Intel Gaudi processors on the cnvrg.io platform to build frictionless machine learning pipelines in just a few clicks.

End-to-end development

Developers can instantly build production-ready pipelines with custom or pre-built ML components.

Portable

cnvrg.io’s MLOps and container-based infrastructure simplify the engineering of heavy and complex tasks.

Optimization

Enables developers to orchestrate any ML task to run on any AI stack to maximize performance and increase efficiency.

Optimize AI workflows with Gaudi’s price performance and Metacloud’s flexibility.

With cnvrg.io’s Metacloud, AI developers have the flexibility to run end-to-end AI flows on the mix of cloud and on-premises compute resources and storage they choose.

On-premises operators can dynamically expand their AI processing capability on an as-needed basis with Metacloud’s cloud bursting onto Amazon EC2 DL1 instances powered by Intel Gaudi accelerators. Amazon instances based on Intel Gaudi accelerators deliver up to 40% better price performance than existing GPU-based EC2 instances, enabling customers to train more while spending less. With Intel Gaudi accelerators on Metacloud, AI workloads are:

Flexible and seamless

Developers can choose the mix of infrastructure and hardware that best optimizes capacity or cost, even within the same AI/ML workflow or pipeline.

Easily deployed and managed

Metacloud provides a developer-friendly portal to set up and launch AI/ML workflows on the developer’s choice of hardware.

Dynamic and fast

Developers can manage data and develop, train, and deploy models on a mix of infrastructure instantly.

For more information on how developers can combine the price performance of Intel Gaudi accelerators with the ease, flexibility, and expandability of cnvrg.io Metacloud, read our blog.

For a deep dive into how to implement AI training with Intel Gaudi accelerators on the cnvrg.io platform, watch here.