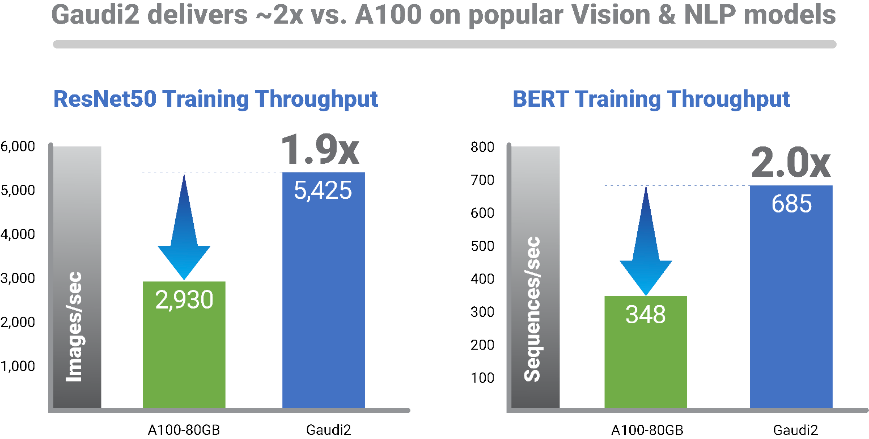

Habana® Gaudi®2 demonstrates 2x throughput over Nvidia’s A100 GPU at Intel Vision

Dateline: DALLAS and TEL AVIV, ISRAEL – May 10, 2022 – Today at the Intel Vision conference, Habana Labs, an Intel company, announced its second-generation deep learning processors, the Habana® Gaudi®2 Training and Habana® Greco™ Inference processors. The processors are purpose-built for AI deep learning applications and implemented in 7nm technology. They build upon Habana’s high-efficiency architecture to provide customers with higher-performance model training and inferencing for computer vision and natural language applications in the data center. At Intel Vision, Habana Labs revealed that Gaudi2 delivers twice the training throughput of the Nvidia A100-80GB GPU on both the ResNet-50 computer vision model and the BERT natural language processing model.

“The launch of Habana’s new deep learning processors is a prime example of Intel executing on its AI strategy to give customers a wide array of solution choices – from cloud to edge – addressing the growing number and complex nature of AI workloads. Gaudi2 can help Intel customers train increasingly large and complex deep learning workloads with speed and efficiency, and we’re anticipating the inference efficiencies that Greco will bring.”

Sandra Rivera, Intel executive vice president and general manager of the Datacenter and AI Group

Habana® Gaudi®2 Training Processor

Habana Gaudi2 significantly increases training performance, building on the same high-efficiency first-generation architecture that delivers up to 40% better price performance to customers in the AWS cloud with Amazon EC2 DL1 Instances and on-premises with the Supermicro X12 Gaudi Training Server. In addition to its leap from 16nm to 7nm, Gaudi2 features 24 Tensor Processor Cores, up from eight in the first-generation Gaudi, designed expressly for large deep learning workloads. The new Gaudi2 AI training processor also integrates media processing on-chip, triples on-board memory to 96 GB and doubles SRAM to 48 MB. Together, these advances contribute to Gaudi2’s performance: up to three times the training throughput of first-generation Gaudi.
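The generational figures above can be worked backwards to the first-generation values they imply. The following is an illustrative sketch only: the dictionary and function names are hypothetical, and the first-generation memory and SRAM values are derived from the "triple" and "double" wording rather than stated directly in this announcement.

```python
# Gaudi2 figures as stated in this announcement.
GAUDI2 = {"tensor_processor_cores": 24, "memory_gb": 96, "sram_mb": 48}

# Generational multipliers implied by the text:
# cores go from 8 to 24 (3x), memory triples, SRAM doubles.
MULTIPLIER = {"tensor_processor_cores": 3, "memory_gb": 3, "sram_mb": 2}


def implied_first_gen(gaudi2, multiplier):
    """Back out first-generation values by dividing by the stated multiplier."""
    return {key: gaudi2[key] / multiplier[key] for key in gaudi2}


first_gen = implied_first_gen(GAUDI2, MULTIPLIER)
for key, value in first_gen.items():
    print(f"{key}: {value:g} -> {GAUDI2[key]} ({MULTIPLIER[key]}x)")
```

Under these assumptions, the implied first-generation configuration is eight cores, 32 GB of on-board memory and 24 MB of SRAM.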

“Compared with the A100 GPU, implemented in the same process node and roughly the same die size, Gaudi2 delivers clear leadership training performance as demonstrated with apples-to-apples comparison on key workloads,” said Eitan Medina, chief operating officer at Habana Labs. “This deep-learning acceleration architecture is fundamentally more efficient and backed with a strong roadmap.”

Customer Benefits

Gaudi2 gives customers a high-performance deep learning training processor alternative for computer vision workloads, such as object detection in autonomous vehicles, object detection in medical imaging, and defect detection in manufacturing. It also enables customers to train natural language processing workloads, such as subject matter analysis to detect fraud in sensitive documents like insurance claims and grant submissions.

Networking Capacity, Flexibility, Efficiency

Habana has made it cost-effective and easy for customers to scale out training capacity by amplifying training bandwidth on Gaudi2. With 24 100-Gigabit RDMA over Converged Ethernet (RoCE) ports integrated on chip, customers can easily scale and configure Gaudi2 systems to suit their deep learning cluster requirements. Because systems are built on widely used, industry-standard Ethernet connectivity, Gaudi2 enables customers to choose from a wide array of Ethernet switching and related networking equipment, resulting in overall cost savings. Avoiding proprietary interconnect technologies in the data center is important for IT decision makers who want to avoid single-vendor “lock-in.” The on-chip integration of the network interface controller (NIC) ports also lowers component costs.
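As a rough illustration of what the port count means in aggregate, the arithmetic can be sketched as follows. This is raw line rate only: it ignores protocol overhead, and the announcement does not say how the ports are divided between intra-server and scale-out links. The function and constant names are hypothetical.

```python
PORTS_PER_CHIP = 24    # RoCE ports integrated on each Gaudi2 (from the text)
GBITS_PER_PORT = 100   # 100 Gigabit Ethernet per port (from the text)


def aggregate_bandwidth_gbps(ports, gbits_per_port):
    """Raw aggregate line rate in gigabits per second."""
    return ports * gbits_per_port


chip_gbps = aggregate_bandwidth_gbps(PORTS_PER_CHIP, GBITS_PER_PORT)
chip_tbps = chip_gbps / 1000       # terabits per second
chip_gbytes_per_s = chip_gbps / 8  # gigabytes per second

print(f"{chip_gbps} Gb/s = {chip_tbps} Tb/s = {chip_gbytes_per_s} GB/s per chip")
```

That works out to 2.4 Tb/s of raw Ethernet bandwidth per processor.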

Habana provides customers with the HLS-Gaudi®2 server, featuring eight Gaudi2 processors and dual-socket Intel® Xeon® Ice Lake CPUs. One thousand Gaudi2 processors have been deployed in the Habana Gaudi2 data center in Haifa, Israel to support research and development for Gaudi2 software optimization and to inform further advancements in forthcoming solutions.

Simplified model build and migration

The Habana SynapseAI® Software Suite is designed for deep learning model development and to ease migration of existing GPU-based models to Gaudi platform hardware. The SynapseAI stack features integration of TensorFlow and PyTorch frameworks and 30+ computer vision and natural language processing models. Developers are supported with documentation and tools, how-to content, and a community support forum on the Habana Developer Site, and with reference models and a model roadmap on the Habana GitHub.

Availability of Gaudi2 training solutions

Habana is partnering with Supermicro to bring the Supermicro Gaudi®2 Training Server to market in 2H 2022. Habana has also teamed with DDN® to deliver a turnkey server that pairs the Supermicro Gaudi server with the DDN AI400X2 storage solution for augmented AI storage capacity.

For more information regarding the Gaudi2 processor, please see the Gaudi2 Whitepaper.

To learn more about how Gaudi2 can bring performance and efficiency advancements to your company, contact us.

Gaudi2 performance metrics are based on the Habana® HLS-Gaudi®2 Server. For more information on performance metrics and comparisons, please see our Claims Validation page.

Partner and customer endorsements of Gaudi2 processors

Mobileye

“As a world leader in automotive and driving assistance systems, training cutting edge Deep Learning models for tasks such as object detection and segmentation that enable vehicles to sense and understand their surroundings is mission-critical to Mobileye’s business and vision. As training such models is time consuming and costly, multiple teams across Mobileye have chosen to use Gaudi-accelerated training machines, either on Amazon EC2 DL1 instances or on-prem. Those teams constantly see significant cost-savings relative to existing GPU-based instances across model types, enabling them to achieve much better Time-To-Market for existing models or to train much larger and more complex models aimed at exploiting the advantages of the Gaudi architecture,” said Gaby Hayon, executive vice president of R&D at Mobileye. “We’re excited to see Gaudi2’s leap in performance, as our industry depends on the ability to push the boundaries with large-scale high performance deep learning training accelerators.”

Leidos

“The rapid-pace R&D required to tame COVID demonstrates an urgent need our medical and health sciences customers have for fast, efficient deep learning training of medical imaging data sets, when hours and even minutes count, to unlock disease causes and cures. We expect Gaudi2, building on the speed and cost-efficiency of Gaudi, to provide customers with dramatically accelerated model training, while preserving the DL efficiency we experienced with first-gen Gaudi,” said Chetan Paul, CTO Health and Human Services at Leidos.

Supermicro

“We are excited to bring our next-generation AI deep learning server to market, featuring the high-performance Gaudi2 accelerators enabling our customers to achieve faster time-to-train, efficient scaling with industry-standard Ethernet connectivity, and improved TCO,” said Charles Liang, president and CEO of Supermicro. “We are committed to collaborating with Intel and Habana to deliver leadership AI solutions optimized for deep learning in the cloud and data center.”

DDN

“We congratulate Habana on the launch of its new high-performance, 7nm Gaudi2 accelerator. We look forward to collaborating on the turnkey AI solution consisting of our DDN AI400X2 storage appliance combined with Supermicro Gaudi®2 Training Servers to help enterprises with large, complex deep learning workloads unlock meaningful business value with simple but powerful storage,” said Paul Bloch, president and co-founder of DataDirect Networks.

Habana® Greco™ Inference Processor

In addition to the new Gaudi2 Training processor, Habana introduced its newest entry into the inference market, the Greco Inference processor, which is slated for customer sampling in 2H 2022 and mass production in Q1 2023. Greco will provide the performance and power efficiencies achieved with the leap from a 16nm to a 7nm process. To enable greater inference speed and efficiency for computer vision deployments, Greco integrates media encoding and processing on-chip, supporting the HEVC, H.264, JPEG and P-JPEG media formats. In addition, Greco will support multiple data types (BFloat16, FP16, INT8/UINT8 and INT4/UINT4), giving customers options and flexibility in balancing inference speed and accuracy.
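One way to see why the choice of data type matters is to compare how much storage a model's weights need at each precision. The sketch below uses the standard bit widths of the listed types; the 25-million-parameter model size is a hypothetical example, not a figure from this announcement, and the function name is illustrative.

```python
# Standard bit widths of the data types listed for Greco.
BITS_PER_VALUE = {
    "BF16": 16, "FP16": 16,
    "INT8": 8, "UINT8": 8,
    "INT4": 4, "UINT4": 4,
}


def weight_footprint_mb(num_parameters, dtype):
    """Storage needed for the weights alone, in megabytes (10^6 bytes)."""
    return num_parameters * BITS_PER_VALUE[dtype] / 8 / 1e6


HYPOTHETICAL_PARAMS = 25_000_000  # assumed model size, for illustration only

for dtype in ("BF16", "INT8", "INT4"):
    size = weight_footprint_mb(HYPOTHETICAL_PARAMS, dtype)
    print(f"{dtype}: {size:g} MB")
```

Halving the bit width halves the weight footprint, which is typically traded against some loss of numerical precision; how much accuracy a given model loses depends on the model and the quantization method used.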

Greco will be available in a new form factor, reduced from the Goya™ dual-slot PCIe card to a single-slot, half-height, half-length (HHHL) PCIe Gen 4 x8 card. Packing the performance of the full PCIe card format into the compact HHHL form factor improves inference compute density and gives system designers greater efficiency and flexibility.

Through architectural, processor technology and form factor advances, the Greco processor significantly reduces power from Goya’s 200W TDP to 75W TDP, lowering the cost of customer operations for inference deployments.
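The size of that reduction can be put in numbers with a short calculation. This sketch treats TDP as continuous power draw, which is an upper-bound assumption rather than a measured figure; the function names are hypothetical.

```python
GOYA_TDP_W = 200   # from the text
GRECO_TDP_W = 75   # from the text
HOURS_PER_YEAR = 24 * 365


def power_reduction_fraction(old_watts, new_watts):
    """Fractional reduction in power going from old to new."""
    return (old_watts - new_watts) / old_watts


def annual_energy_kwh(tdp_watts, hours=HOURS_PER_YEAR):
    """Energy per year if the card ran at TDP continuously (assumption)."""
    return tdp_watts * hours / 1000


reduction = power_reduction_fraction(GOYA_TDP_W, GRECO_TDP_W)
saved_kwh = annual_energy_kwh(GOYA_TDP_W) - annual_energy_kwh(GRECO_TDP_W)

print(f"TDP reduction: {reduction:.1%}")
print(f"Upper-bound energy saved per card per year: {saved_kwh:g} kWh")
```

The TDP figures correspond to a 62.5% reduction per card; actual savings depend on utilization and workload.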

Development and deployment of computer vision and NLP inference workloads on the Greco processor are supported by the Habana SynapseAI Software Suite, the Habana Developer Site and Habana GitHub, with integration of TensorFlow and PyTorch frameworks.

For more information on the Habana Greco, contact us.

About Habana Labs

Habana Labs, an Intel company, is a leading AI processor company founded in 2016 to develop purpose-built processor platforms optimized for training deep neural networks and for inference deployment in production environments. We are unlocking the true potential of AI with platforms offering orders of magnitude improvements in processing performance, scalability, cost, and power consumption. Acquired by Intel Corporation in 2019, Habana operates as an independent business unit within the Intel Data Products Group.